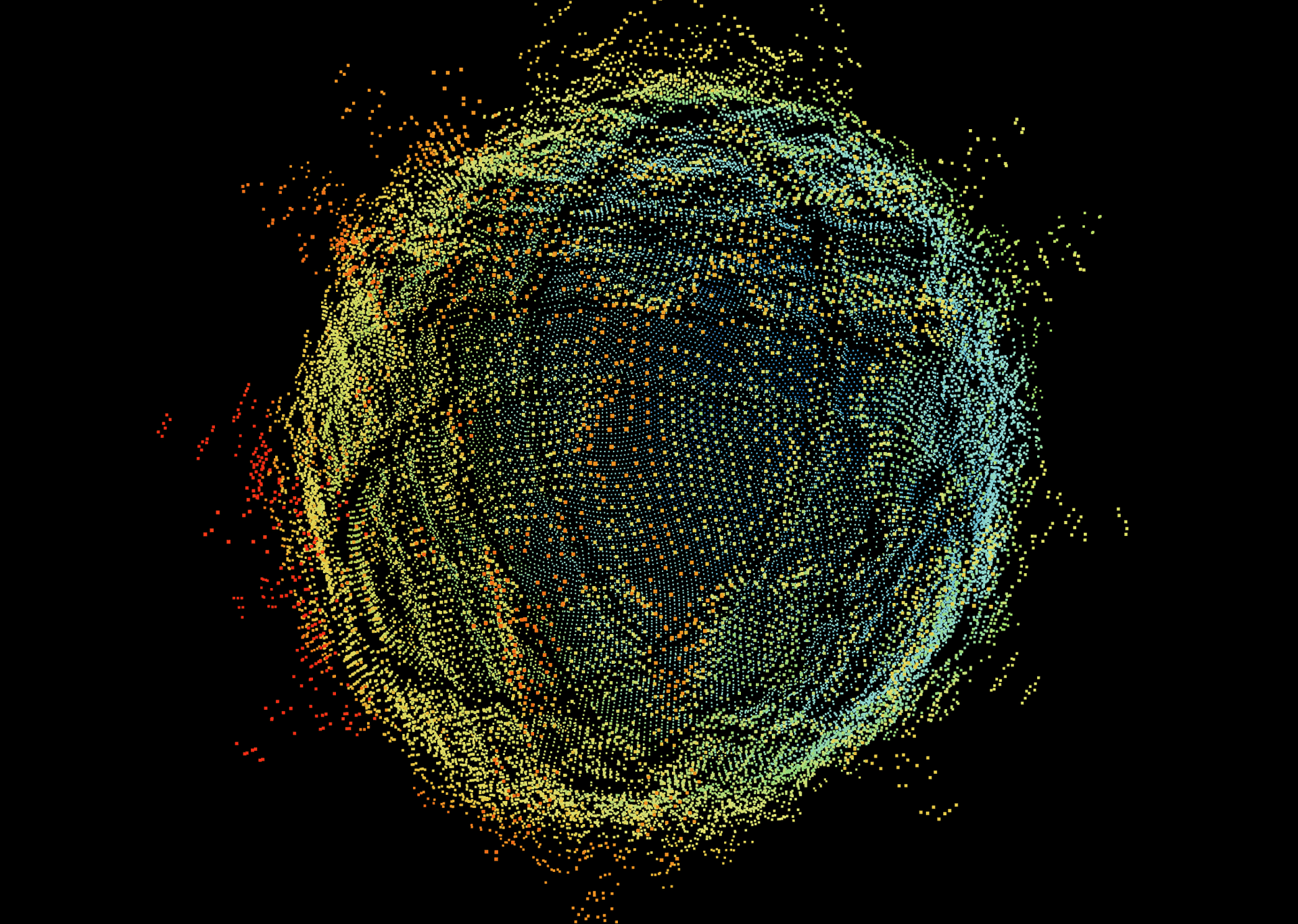

Audio Visualizer

Real-time 3D audio visualizer implemented with React Three Fiber.

Overview

This Audio Visualizer is a browser-native implementation of a custom real-time three-dimensional audio visualization algorithm. Live audio is captured and analyzed with the Web Audio API, then fed as input into the visualization algorithm. Any audio source can be used to drive the real-time deformation of a point cloud.
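The capture side of this pipeline can be illustrated with standard Web Audio API calls. The following is a minimal sketch, not the project's own `useAudioStream` hook. The function names `openAnalyser` and `binToHz` and the default `fftSize` are illustrative assumptions.

```typescript
// Minimal sketch of live audio capture with the Web Audio API.
// Function names and the default fftSize are hypothetical choices.

/** Frequency in Hz at FFT bin `index`; bins are spaced sampleRate / fftSize apart. */
function binToHz(index: number, sampleRate: number, fftSize: number): number {
  return (index * sampleRate) / fftSize;
}

/**
 * Opens an audio input (optionally a specific device) and feeds it into an
 * AnalyserNode. Browser-only: needs navigator.mediaDevices and AudioContext.
 * Browsers may create the context suspended until a user gesture, so
 * ctx.resume() may need to be called from a click handler.
 */
async function openAnalyser(deviceId?: string, fftSize = 2048) {
  const stream = await navigator.mediaDevices.getUserMedia({
    audio: deviceId ? { deviceId: { exact: deviceId } } : true,
  });
  const ctx = new AudioContext();
  const source = ctx.createMediaStreamSource(stream);
  const analyser = ctx.createAnalyser();
  analyser.fftSize = fftSize; // power of two between 32 and 32768
  // Not connected to ctx.destination, so the input is analyzed but not played back.
  source.connect(analyser);

  // One byte (0-255) per bin; frequencyBinCount is fftSize / 2.
  const bins = new Uint8Array(analyser.frequencyBinCount);

  // Call once per animation frame to refresh `bins` with the latest spectrum.
  const read = (): Uint8Array => {
    analyser.getByteFrequencyData(bins);
    return bins;
  };
  return { ctx, analyser, bins, read, sampleRate: ctx.sampleRate };
}
```

With `fftSize = 2048` at 48 kHz, each of the 1024 bins covers about 23.4 Hz, which is the resolution any per-point frequency mapping has to work with.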

Setting aside the techniques used to extract and stage the audio, the visualization process itself has three defining components, none of which depend on the web-based implementation:

- Initial creation and formation of the point cloud

- Point cloud pre-processing and metadata assignment

- Continuous audio-driven real-time deformation of the point cloud

Point Cloud Generation

The live version uses a procedurally generated point cloud designed for stability and readability in motion. The exact generation method and sampling strategy are intentionally omitted from this public page.

The renderer is shape-agnostic and supports alternative structures, but the current configuration prioritizes visual clarity and smooth response under real-time constraints.
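The demo's generation method is not public. As a generic starting point for readers who want to experiment, Fibonacci (golden-angle) sphere sampling produces an evenly distributed cloud. This is a stand-in technique, not the method used in the live version, and the function name `fibonacciSphere` is hypothetical.

```typescript
// Generic stand-in: Fibonacci (golden-angle) sampling of n points on a sphere.
// Returns a flat [x0, y0, z0, x1, y1, z1, ...] array, the layout that
// new THREE.BufferAttribute(positions, 3) expects.
function fibonacciSphere(n: number, radius = 1): Float32Array {
  const positions = new Float32Array(n * 3);
  const goldenAngle = Math.PI * (3 - Math.sqrt(5)); // about 2.39996 rad
  for (let i = 0; i < n; i++) {
    // y steps evenly from just below +1 to just above -1.
    const y = 1 - (2 * i + 1) / n;
    const ringRadius = Math.sqrt(1 - y * y); // radius of the horizontal slice at y
    const theta = i * goldenAngle; // successive points rotate by the golden angle
    positions[i * 3] = Math.cos(theta) * ringRadius * radius;
    positions[i * 3 + 1] = y * radius;
    positions[i * 3 + 2] = Math.sin(theta) * ringRadius * radius;
  }
  return positions;
}
```

Because each point advances by an irrational fraction of a turn, no two points line up in columns, which keeps the surface readable at any point count.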

Point Cloud Pre-Processing

Before playback, each point receives precomputed metadata that drives both positional and color response during animation. This preprocessing stage is optimized to keep per-frame work minimal and maintain low-latency interactivity with large point counts.

That metadata includes a per-point frequency assignment map, baseline visual state, and normalization data so updates remain stable across changing audio conditions. Specific mapping logic and tuning methods are intentionally withheld.
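The actual mapping logic is withheld, but the general shape of such a one-time preprocessing pass can be illustrated. In this hypothetical sketch, each point is assigned an FFT bin from its height on a logarithmic frequency scale, so low frequencies claim proportionally more of the cloud. Its baseline position and rest radius are stored up front. The `PointMetadata` layout, the height-based assignment, and the 30 Hz to 12 kHz range are all assumptions for illustration.

```typescript
// Hypothetical per-point metadata, computed once before playback.
interface PointMetadata {
  baseline: Float32Array;   // copy of the original positions, length 3n
  baseRadius: Float32Array; // distance of each point from the origin, length n
  bin: Uint16Array;         // FFT bin index assigned to each point, length n
}

function preprocess(
  positions: Float32Array,
  binCount: number,   // e.g. analyser.frequencyBinCount
  sampleRate: number,
  minHz = 30,         // illustrative frequency range
  maxHz = 12000,
): PointMetadata {
  const n = positions.length / 3;
  const baseline = positions.slice(); // independent copy, never mutated at runtime
  const baseRadius = new Float32Array(n);
  const bin = new Uint16Array(n);
  const hzPerBin = sampleRate / (2 * binCount); // fftSize = 2 * binCount

  // Vertical extent, used to normalize heights to [0, 1].
  let minY = Infinity;
  let maxY = -Infinity;
  for (let i = 0; i < n; i++) {
    const y = positions[i * 3 + 1];
    if (y < minY) minY = y;
    if (y > maxY) maxY = y;
  }
  const spanY = maxY - minY || 1; // avoid dividing by zero for a flat cloud

  for (let i = 0; i < n; i++) {
    const x = positions[i * 3];
    const y = positions[i * 3 + 1];
    const z = positions[i * 3 + 2];
    baseRadius[i] = Math.hypot(x, y, z);
    // Bottom of the cloud maps to minHz, top to maxHz, interpolated logarithmically.
    const t = (y - minY) / spanY;
    const hz = minHz * Math.pow(maxHz / minHz, t);
    bin[i] = Math.min(binCount - 1, Math.round(hz / hzPerBin));
  }
  return { baseline, baseRadius, bin };
}
```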

Runtime transforms are always applied relative to a stored baseline, which preserves continuity and helps the visualizer recover smoothly as the audio profile shifts.
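Applying transforms relative to a stored baseline means each frame recomputes positions from the rest shape instead of nudging the previous frame's result. This way errors cannot accumulate, and the cloud returns to its rest shape when the audio goes quiet. A minimal sketch of that pattern follows. It assumes a hypothetical layout with a `baseline` position array, a precomputed `baseRadius` per point, and a per-frame radial `offset` array (all names illustrative).

```typescript
// Writes deformed positions into `out`, always starting from the untouched baseline.
function applyRadialOffsets(
  baseline: Float32Array,   // rest positions, length 3n (never modified)
  baseRadius: Float32Array, // |rest position| per point, length n
  offset: Float32Array,     // radial displacement per point for this frame, length n
  out: Float32Array,        // destination positions, length 3n
): void {
  for (let i = 0; i < baseRadius.length; i++) {
    const r0 = baseRadius[i];
    // Scale factor moving the point from radius r0 to r0 + offset[i] along its own ray.
    const s = r0 > 0 ? (r0 + offset[i]) / r0 : 1;
    out[i * 3] = baseline[i * 3] * s;
    out[i * 3 + 1] = baseline[i * 3 + 1] * s;
    out[i * 3 + 2] = baseline[i * 3 + 2] * s;
  }
}
```

In a react-three-fiber `useFrame` callback, `out` would typically be `geometry.attributes.position.array`, followed by setting `geometry.attributes.position.needsUpdate = true` so Three.js re-uploads the buffer.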

Point Deformation Algorithm

At each frame, the engine combines several signals, most of them audio-reactive, to drive radial motion, local detail, and color modulation across the cloud. The public page intentionally omits equation-level details and parameterization of the core deformation model, but the signals fall into four layers:

- A slow structural motion layer for ambient continuity

- A low-end energy layer that reinforces perceived musical impact

- A global loudness response term for overall expansion

- A localized detail term for fine-grained surface articulation

The internal composition, weighting, and transfer functions are private. This keeps the public demo focused on the experience while preserving proprietary implementation details.
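Because the demo's composition and weights are private, the following is only a generic illustration of how four layers like those listed above could be summed into a per-point radial offset. It is not the demo's model. The sine-based ambient motion, every weight, the per-point `bin` and `phase` inputs, and the helper names (`bandEnergy`, `rms`, `WEIGHTS`, `radialOffsets`) are assumptions.

```typescript
// Illustrative only: summing four layers into per-point radial offsets.

/** Mean normalized magnitude (0..1) over bins [start, end). */
function bandEnergy(freq: Uint8Array, start: number, end: number): number {
  let sum = 0;
  for (let i = start; i < end; i++) sum += freq[i];
  return end > start ? sum / ((end - start) * 255) : 0;
}

/** Root-mean-square of normalized magnitudes, a rough loudness proxy. */
function rms(freq: Uint8Array): number {
  let sum = 0;
  for (let i = 0; i < freq.length; i++) {
    const v = freq[i] / 255;
    sum += v * v;
  }
  return freq.length ? Math.sqrt(sum / freq.length) : 0;
}

const WEIGHTS = { ambient: 0.05, bass: 0.3, loudness: 0.2, detail: 0.15 }; // arbitrary

function radialOffsets(
  freq: Uint8Array,    // latest getByteFrequencyData snapshot
  bin: Uint16Array,    // per-point FFT bin assignment
  phase: Float32Array, // per-point phase for the ambient layer
  timeSec: number,
  bassBins: number,    // how many of the lowest bins count as "low end"
  out: Float32Array,   // radial offset per point
): void {
  const bass = bandEnergy(freq, 0, bassBins); // low-end energy layer
  const loud = rms(freq);                     // global loudness layer
  const shared = WEIGHTS.bass * bass + WEIGHTS.loudness * loud;
  for (let i = 0; i < out.length; i++) {
    const ambient = WEIGHTS.ambient * Math.sin(0.5 * timeSec + phase[i]); // slow structural motion
    const detail = WEIGHTS.detail * (freq[bin[i]] / 255);                 // localized detail
    out[i] = ambient + shared + detail;
  }
}
```

The two global layers are computed once per frame and shared by every point, so the per-point loop stays cheap. The resulting offsets would then be applied relative to each point's stored baseline.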

Color and motion evolve together, so visual intensity tracks audio intensity without destabilizing the composition. The exact color transition logic is also omitted.
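Since the demo's color logic is not public, here is one generic way to tie color to intensity. Hue and lightness are interpolated from a calm base color toward a hotter one as a per-point intensity rises, then converted from HSL to RGB for a vertex color buffer. The hue and lightness endpoints and the function names are illustrative assumptions. The conversion follows the standard HSL-to-RGB formula used by CSS Color.

```typescript
// Standard HSL -> RGB conversion; h, s, l in [0, 1], output channels in [0, 1].
function hslToRgb(h: number, s: number, l: number): [number, number, number] {
  const hh = ((h % 1) + 1) % 1; // wrap hue into [0, 1)
  const a = s * Math.min(l, 1 - l);
  const k = (n: number) => (n + hh * 12) % 12;
  const f = (n: number) => l - a * Math.max(-1, Math.min(k(n) - 3, 9 - k(n), 1));
  return [f(0), f(8), f(4)];
}

// Hypothetical endpoints: a cool blue at rest, shifting toward magenta-red when loud.
const BASE_HUE = 0.6;
const HOT_HUE = 0.95;

/** Maps a 0..1 intensity to an RGB triple; out-of-range inputs are clamped. */
function intensityToRgb(intensity: number): [number, number, number] {
  const t = Math.min(1, Math.max(0, intensity));
  const hue = BASE_HUE + (HOT_HUE - BASE_HUE) * t;
  const lightness = 0.35 + 0.25 * t; // brighter as intensity rises
  return hslToRgb(hue, 0.8, lightness);
}
```

The resulting triples can be written into a `Float32Array` and attached with `geometry.setAttribute('color', new THREE.BufferAttribute(colors, 3))` on a material with `vertexColors: true`. Three.js's `Color.setHSL` could replace the hand-written conversion.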

The final presentation adds whole-scene motion and temporal smoothing to keep the visualization coherent during sudden transients.
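A common form of temporal smoothing is an attack/release envelope follower. The smoothed value rises quickly toward a louder input and decays slowly when the input drops, which softens transients without making the visuals feel sluggish. This is a generic technique, not necessarily what the demo uses. The time constants and the name `makeEnvelope` are illustrative. Deriving the blend factor from the frame delta keeps the behavior consistent across frame rates.

```typescript
// Frame-rate-independent attack/release smoothing (a one-pole low-pass filter).
function makeEnvelope(attackSec = 0.03, releaseSec = 0.25) {
  let value = 0;
  return (target: number, dtSec: number): number => {
    // Fast time constant when rising, slow when falling.
    const tau = target > value ? attackSec : releaseSec;
    // alpha approaches 1 when dt >> tau (jump to target) and 0 when dt << tau (hold).
    const alpha = 1 - Math.exp(-dtSec / tau);
    value += (target - value) * alpha;
    return value;
  };
}
```

In react-three-fiber this would typically run inside `useFrame((state, delta) => ...)`, passing `delta` (seconds since the last frame) as `dtSec`, with one envelope per smoothed signal.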

Web Implementation

I implemented the entire algorithm as a web page because I wanted to experiment with it in real time and make it easy for others to do the same. The live demo exposes a control panel (built with Leva) that lets visitors tune visual behavior in real time. Orbit controls enable interactive camera rotation and zoom, and every setting takes effect immediately, so you can see exactly how each adjustment affects the visualization. I encourage you to try it out yourself!

I also implemented a spectrogram that you can use to inspect the audio signal your browser receives from the selected audio source.
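A scrolling spectrogram can be built from successive `getByteFrequencyData` snapshots. On each frame, an RGBA image is shifted one pixel left and the newest spectrum is painted as the rightmost column, with low frequencies at the bottom. This sketch keeps the image in a plain `Uint8ClampedArray`, the same layout `ImageData` uses. The grayscale mapping and the function name `pushSpectrogramColumn` are assumptions, not the demo's implementation.

```typescript
// Scrolls an RGBA spectrogram left by one column and appends the newest spectrum.
// image: width * height * 4 bytes, row-major, row 0 at the top (ImageData layout).
function pushSpectrogramColumn(
  image: Uint8ClampedArray,
  width: number,
  height: number,
  freq: Uint8Array, // one getByteFrequencyData snapshot
): void {
  const rowBytes = width * 4;
  for (let y = 0; y < height; y++) {
    const row = y * rowBytes;
    // Shift this row left by one pixel (4 bytes); copyWithin handles the overlap.
    image.copyWithin(row, row + 4, row + rowBytes);
    // Bottom row shows bin 0; rows further up show progressively higher bins.
    const bin = Math.floor(((height - 1 - y) / height) * freq.length);
    const v = freq[bin];
    const px = row + rowBytes - 4; // rightmost pixel of this row
    image[px] = v;       // R
    image[px + 1] = v;   // G
    image[px + 2] = v;   // B
    image[px + 3] = 255; // A (opaque)
  }
}
```

Drawing the buffer is then one call per frame: `ctx.putImageData(new ImageData(image, width, height), 0, 0)` on a 2D canvas context.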

Looking Ahead

There is still a lot to explore in this project. In no particular order, here is what I am still working on:

- Additional visual behavior modes and presets

- Improvements to color behavior and transitions

- Continued refinement of deformation quality

- Performance optimizations

- Expanded shape and scene configuration support

- Adding images and diagrams to this article (I realize the content is a bit dense as it stands), and possibly expanding it to cover the specific challenges I faced implementing the algorithm in a web environment.

Technologies Used

- Core Stack – Next.js 14 (App Router), React 18, TypeScript; built on the T3 Stack with Tailwind CSS (scaffolded project-wide, not directly used in the visualizer). The main visualization logic is implemented in TypeScript, leveraging React hooks for state management and lifecycle handling.

- 3D Graphics – Three.js via `react-three-fiber` for declarative WebGL scene management and high-performance point-cloud rendering.

- Audio Analysis – Web Audio API’s `AnalyserNode` for real-time FFT; a custom `useAudioStream` hook handles device selection, stream setup, and frequency data.

- UI & Styling – Tailwind CSS utility classes and custom global styles; Leva for live parameter panels; Radix UI primitives for accessible dialogs/tooltips; Heroicons & react-icons for iconography.

- Utilities & Debugging – Modular math and color utilities implemented in TypeScript for displacement and hue logic; a `StatsForNerds` component for runtime audio/visual stats.

- Build & Documentation – Next.js build system with standard npm scripts; project deployed and hosted on Vercel.